Blog

Research notes and insights on my published and ongoing work.

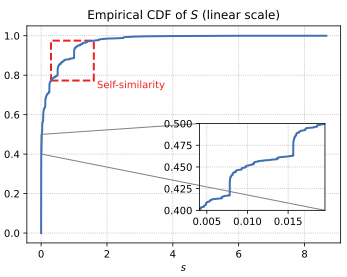

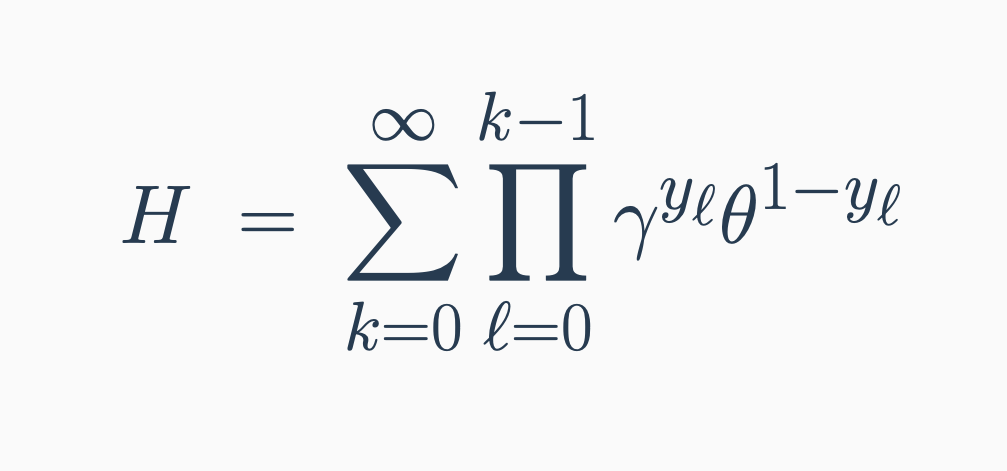

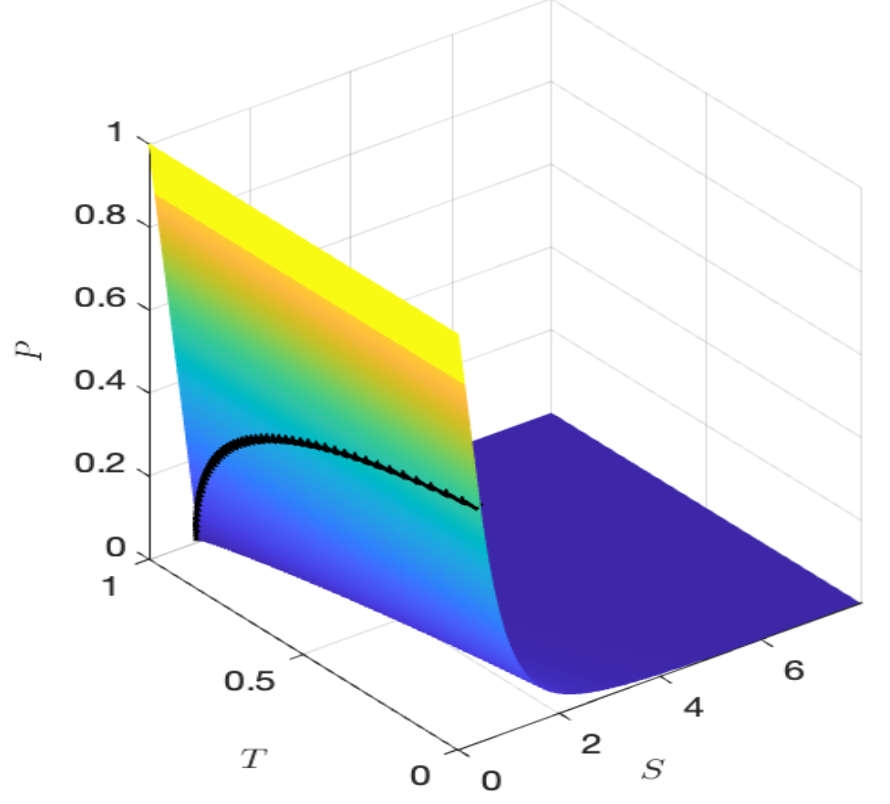

Exact lower bounds on the non-convergence probability of probabilistic direct search

We study the distribution of a random series arising from probabilistic direct search, revealing power-law tails, fractal structure, and exact lower bounds on the non-convergence probability.

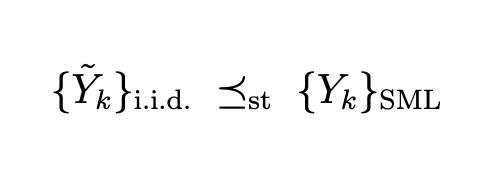

Notes on stochastic orders and submartingale-like assumptions for optimization

We show that dependent Bernoulli sequences under submartingale-like assumptions are stochastically dominated by i.i.d. benchmarks, enabling direct transfer of classical concentration inequalities.

OptiProfiler: A Platform for Benchmarking Optimization Solvers

We present OptiProfiler, an automated and flexible benchmarking platform for optimization solvers, with a focus on derivative-free optimization.

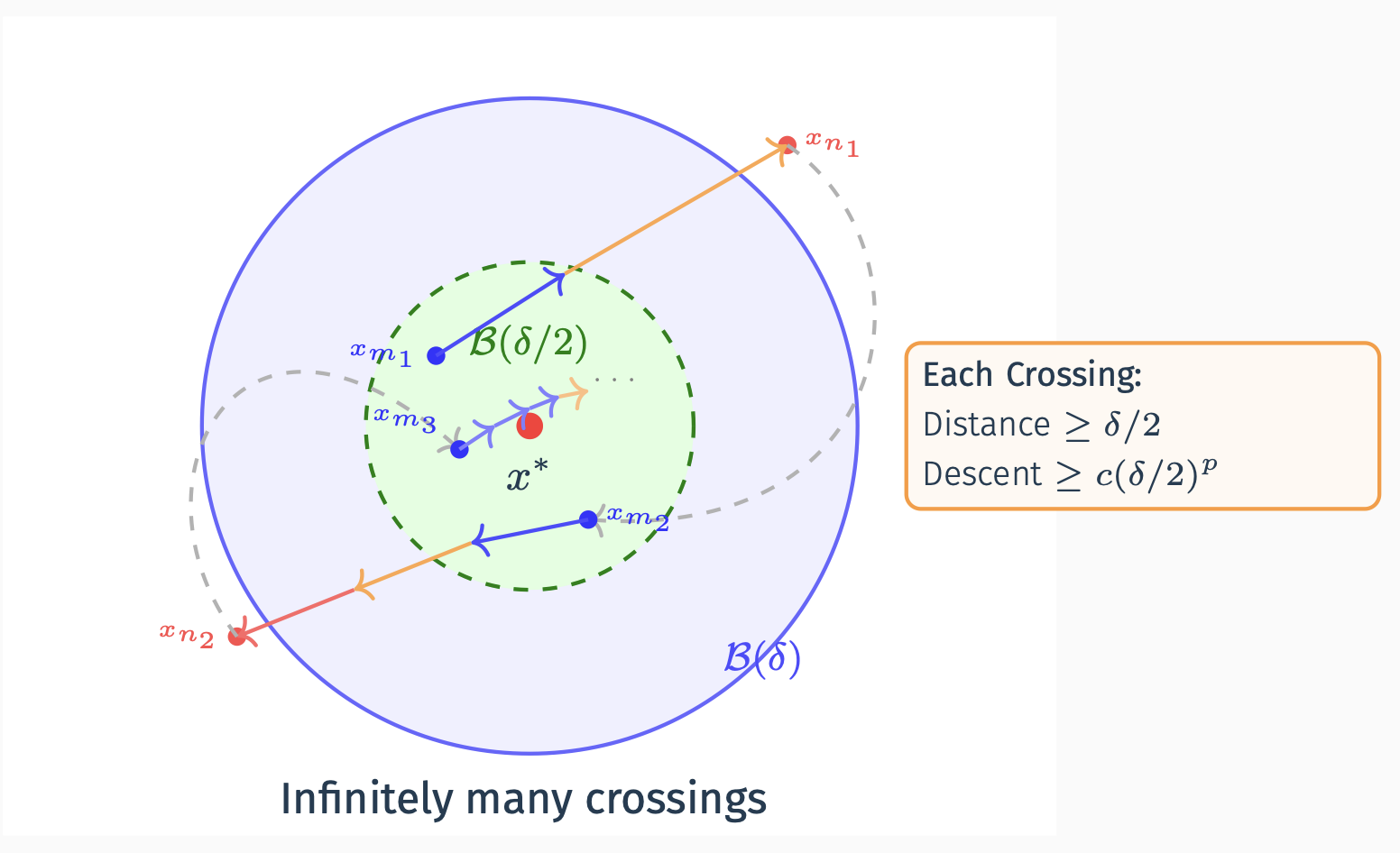

A unified series condition for the convergence of derivative-free trust region and direct search

We propose a unified series condition that governs the convergence of both trust-region and direct-search methods in derivative-free optimization.

Gradient convergence of direct search based on sufficient decrease

We prove that direct search based on sufficient decrease achieves full gradient convergence: the gradient norm tends to zero along the entire sequence of iterates, strengthening the classical liminf result to a full limit.

Non-convergence analysis of probabilistic direct search

We establish a non-convergence theory for probabilistic direct search, answering the question: when will your algorithm fail to converge?

Error analysis of finite difference scheme for American option pricing under regime-switching with jumps

We provide a rigorous error analysis for the finite difference scheme applied to American option pricing under regime-switching models with jumps, establishing convergence rates and stability conditions.